SWE/SRE Engineering Manager for 15 years.

@hatchman76

We cannot hire like we used to. We have to change.

LLMs and AI-enabled tooling have significant productivity value.

Hollowing-out of SRE Expertise is a material risk to any business.

Enshittifying Complex Systems is going to hurt us all.

Anything AI! LLM, Agent etc...

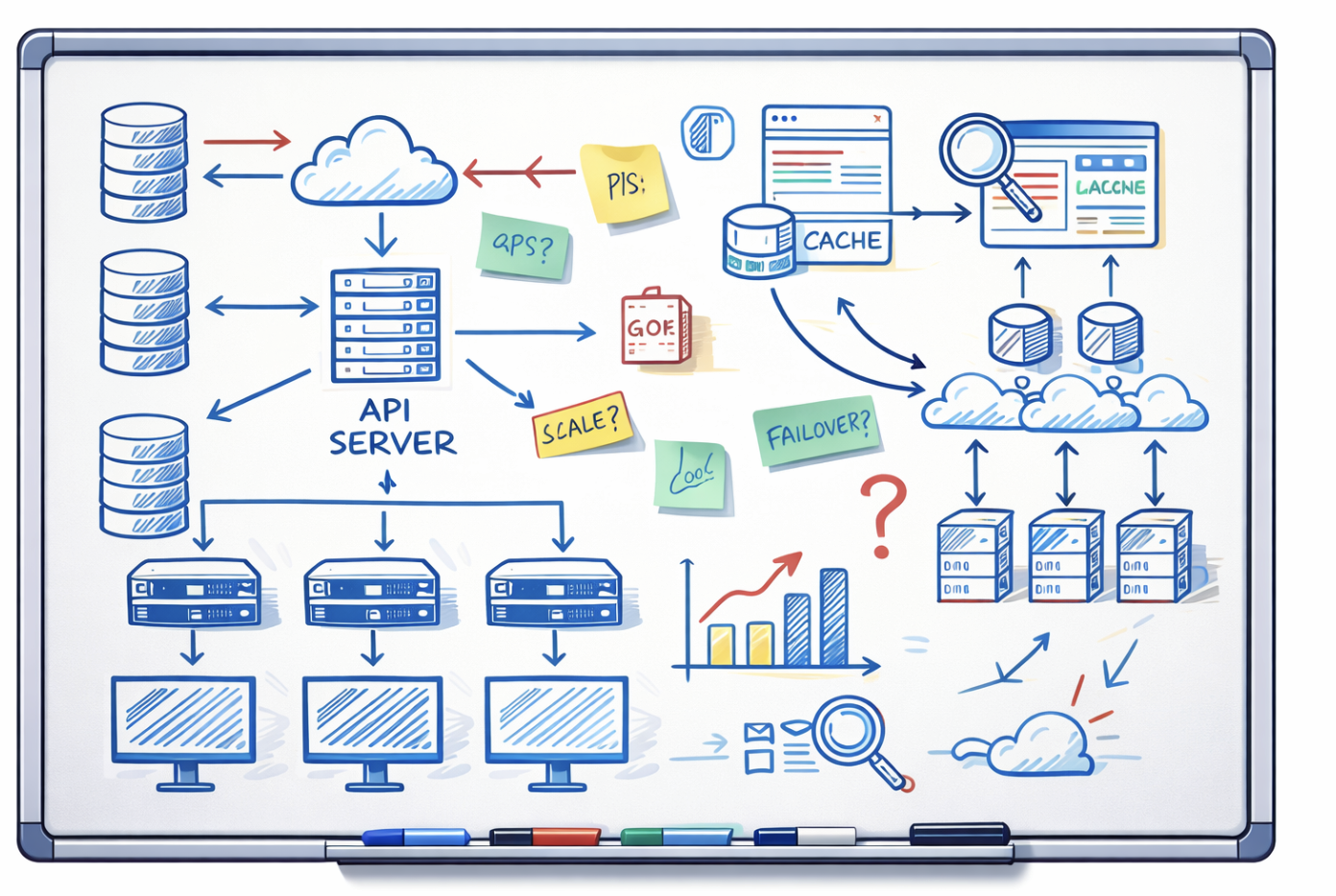

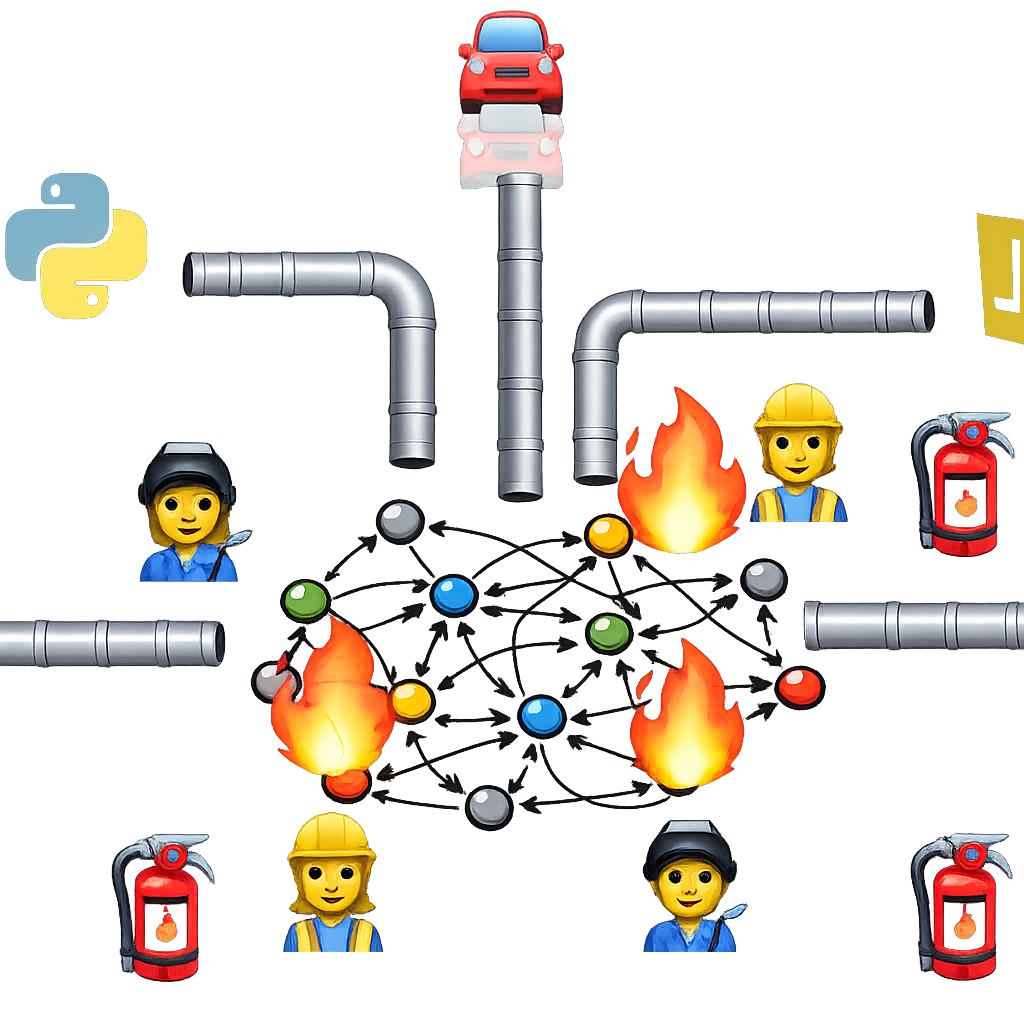

any complex distributed software system

Almost always in person.

~100

Recruiter Screen

SRE Mindset

Management & Leadership

15 hours

Apache Web Server Diagnosis

Code challenge

Code refactor

Lunch!

Management and Leadership

System Design

2 - 3 days

Please share your ideas in Slack!

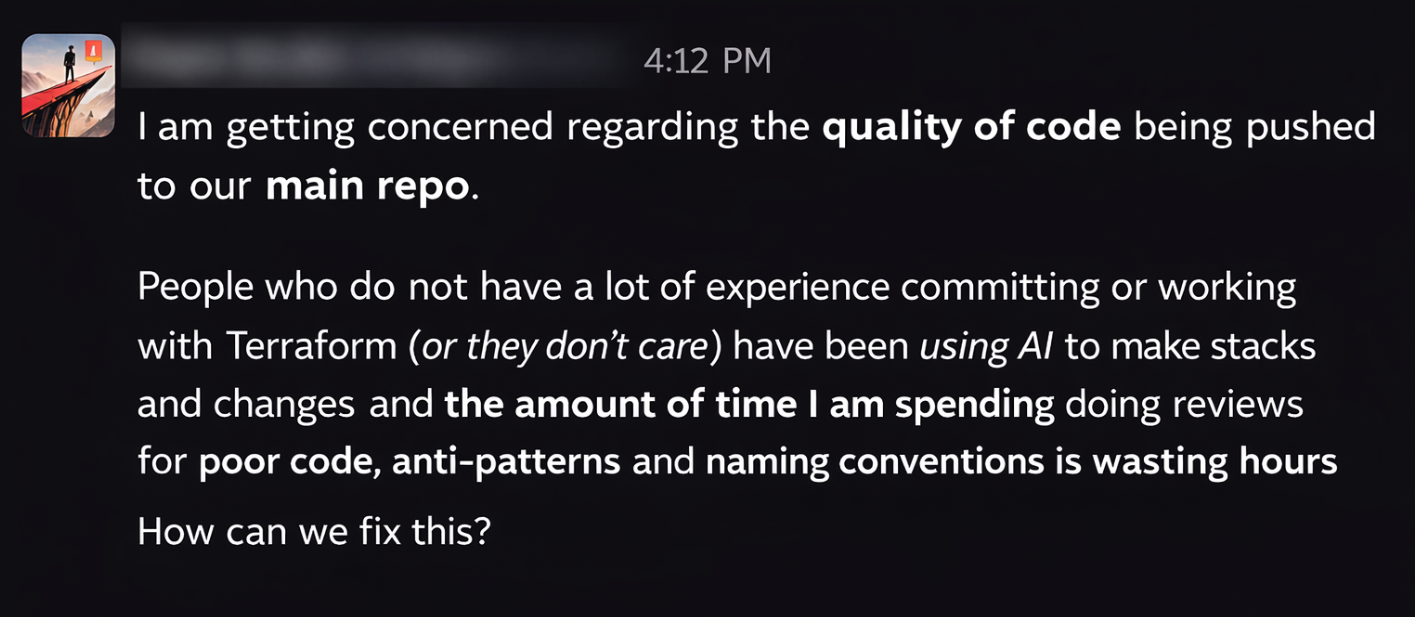

Top down pressure to use AI.

No-one wants to be left behind.

Tell candidates not to use it. Then demand they do on Day 1.

Invest hard to hire for expertise, only to engineer it out of the role?

Creativity?

Innovation?

Critical Thinking?

We don't just hire for raw tech skills.

Printing Press

~1440

Spinning Jenny

~1764

Arc Welder

~1885

Power Mitre Saw

~1964

GPS Navigation

~1990s

stochastic

dynamic

emergent

unstable

non-linear

heterogeneous

evolving

context-sensitive

and many more...

5 Whys

Occam's Razor

William of Ockham

1287 – 1347

Occam's razor is a problem-solving principle stating that when faced with competing explanations for the same phenomenon, the simplest one—requiring the fewest assumptions—is usually the best. It is a mental tool used to cut through unnecessary complexity, not a guarantee of truth.

But what if something could reduce this reliance?

Remember me?

I wrote a book once in 1911!

| Centralize tacit worker knowledge into prescriptive procedures. | Centralize tacit engineering knowledge into prescriptive LLM prompts. |
| Hire cheaper workers to "follow the script". | Hire cheaper "prompt jockeys" to follow them. |

This works when processes are repeatable and linear...

...but only if you don't care about worker engagement, happiness and innovation!

And haven't been for decades.

the speed at which it can generate beliefs; and

the plausibility of those beliefs.

The cost?

the distance between apparent certainty and actual understanding will grow continuously.

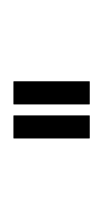

"...GPT Base helped students solve about 48% more practice problems correctly, but when the exam came without AI, those same students scored 17% worse than the kids who never used AI at all..."

https://knowledge.wharton.upenn.edu/article/without-guardrails-generative-ai-can-harm-education

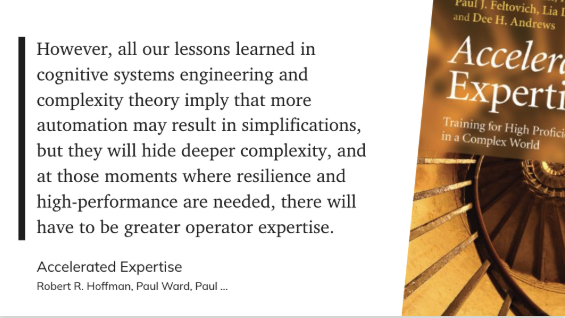

Hoffman et al. (2014). Accelerated Expertise.

Novices vs experts need different conditions:

What helps experts, who already possess learned causal models, will hurt novices, who still need to build their mental models.

Desirable difficulty:

Training that's too easy only produces shallow learning.

Scenario-based practice in messy, real contexts:

Exposure to realistic, varied situations and feedback, not just perfectly guided walkthroughs.

Experts seek out corrective feedback:

They want to be told where they're wrong; they don't want a tool that just makes them look right.

"Adults learn most of what they use at work or at leisure, while at work or leisure. Most of what is taught in classroom settings is forgotten, and much of what is remembered is irrelevant."

- Russell Ackoff

Understanding comes through trial and error, adaptation at the edge, testing.

This is the fundamental mindset of good SREs.

This is the capability we hire for and must cultivate on the job.

When does AI stop being a tool?

And become the Operator?

SREs?

or....

And who has the expertise to fix it now?

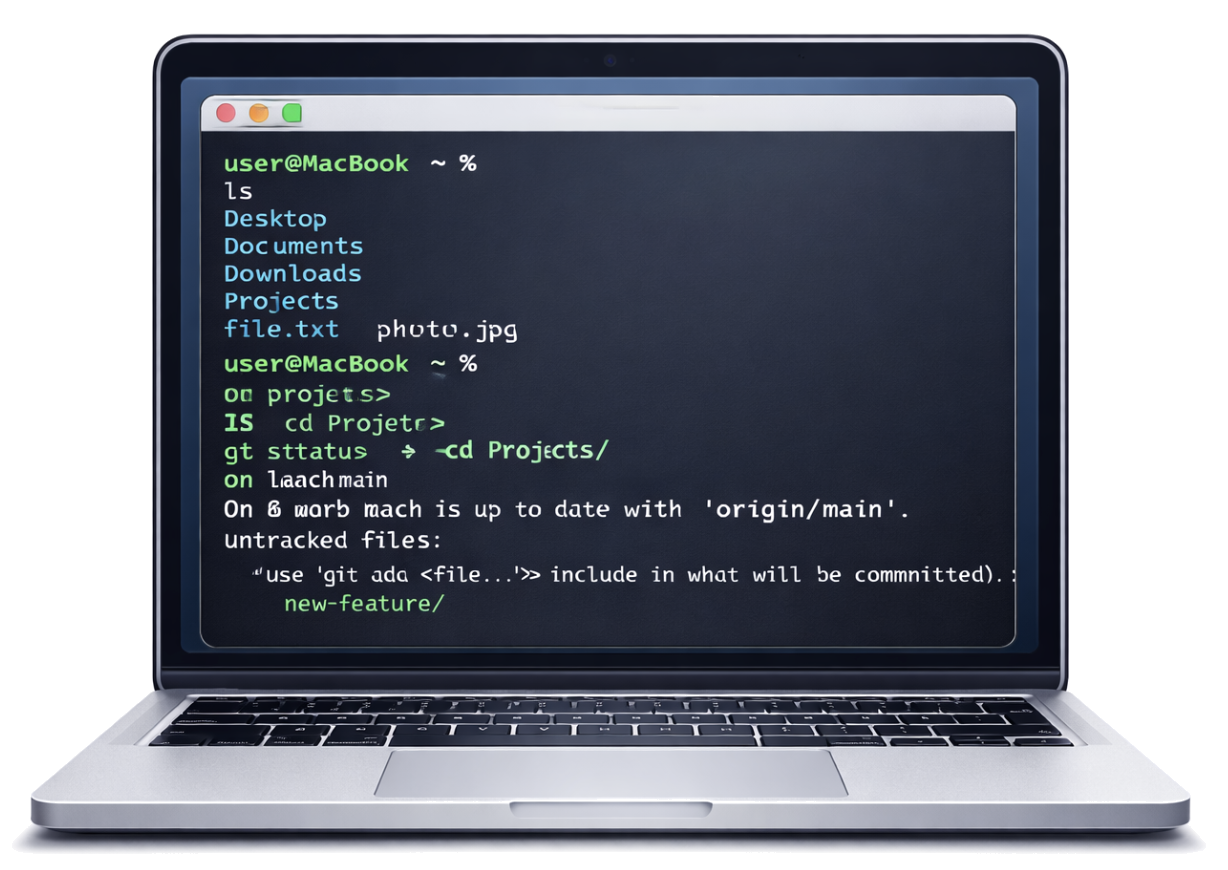

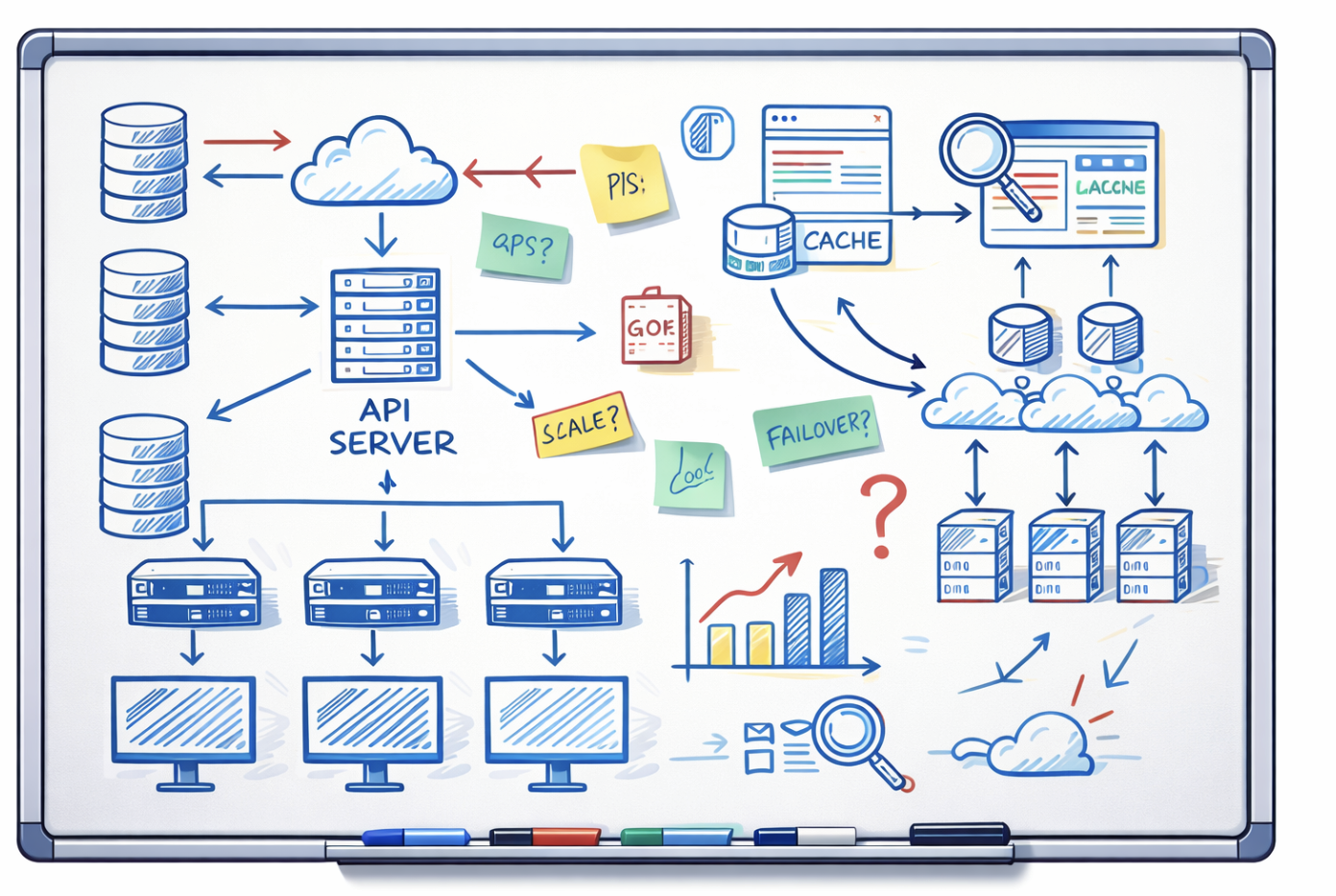

Fact: Complex System control planes become as complex as the systems they represent.

but it may just get a lot harder now.

Understand interactions between components, build awareness of unintended consequences.

Adapt to dynamic conditions, rebuild mental models.

Think critically, make trade-offs under pressure.

As a tool it vastly improves productivity and reduces mundane, repetitive tasks.

But as an Operator it threatens to enshittify your systems.

Understand the impacts on hiring and recruiting - the old signals are changing.

Be clear on what we are cultivating and incentivizing with AI - and the impacts.

Be clear on what the value of AI is to your role, to your teams, and to your business.

What really matters is how we adapt to it, not how we fight it.

Humans are, and always have been, the most adaptable components of any complex socio-technical system.

The ability to learn and build expertise in these systems is critical to their success and safety.

AI must be our partner, not a replacement.

Accelerated Expertise. R. Hoffman, P. Ward, P. Feltovich (2013).

Five Minds for the Future. Howard Gardner (2005).

From Mechanistic to Systemic Thinking. Russell L. Ackoff (YouTube).

Ironies of Automation. L. Bainbridge (1983).

How Complex Systems Fail. Richard I. Cook (2000).

Knowing What We Know. Simon Winchester (2023).

Manufacturing Advantage. E. Appelbaum, T. Bailey, P. Berg (2000).

Rigor in AI. A. Olteanu, S. L. Blodgett et al. (2025).

Talking about Machines. Julian E. Orr (1996).

The Principles of Scientific Management. Frederick Winslow Taylor (1911).

The Tyranny of Metrics. Jerry Z. Muller (2018).

The Unaccountability Machine. Dan Davies (2024).